Best AI 3D Model Texture Generator for Faster Texture Workflows

- This guide covers how AI texture generation works, what criteria actually matter for production use, a closer look at Neural4D, and practical workflows by use case.

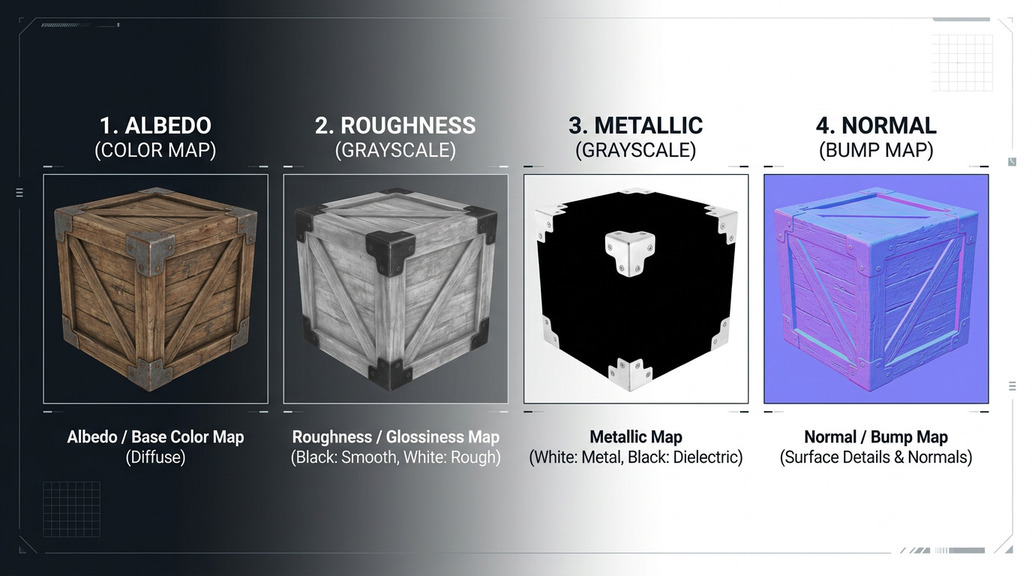

- An AI 3D model texture generator automatically produces PBR map sets (albedo, roughness, metallic, normal) from a mesh and a reference image or text prompt, replacing hours of manual UV painting.

- Not all tools deliver relightable output. The most common production failure is baked lighting in the albedo channel, which breaks under dynamic in-engine lighting.

- Neural4D AI Texture uses an uploaded 3D model as the geometric reference when generating textures from a provided 2D image, producing a clean, relightable PBR set built on high-quality triangle topology.

If you have spent time waiting on manual UV unwraps and hand-painted maps, you already know how much time disappears before a single asset reaches your engine. The AI 3D model texture generator category has grown fast because studios, indie developers, and product teams hit the same bottleneck: geometry can move fast, but texturing slows approval, iteration, and delivery. This guide shows what separates preview-grade output from relightable PBR textures, where Neural4D fits, and which workflow choices save the most production time.

Part 1: What Is an AI 3D Model Texture Generator?

An AI 3D model texture generator is a tool that produces surface maps for a 3D mesh automatically, using machine learning rather than manual painting. The output typically includes a full PBR (Physically Based Rendering) map set: albedo, roughness, metallic, and normal maps. Some tools also generate ambient occlusion and emissive channels.

The core difference from traditional texture baking is that AI tools can infer surface detail and material behavior from visual references or text prompts, without requiring the artist to paint every detail by hand. The mesh geometry shapes the projection; the AI fills in the material logic.

The PBR foundation. Most modern game engines and real-time renderers expect PBR maps. If you want to go deeper on what those maps do and why they matter, this overview of PBR texturing walks through each channel and its role in realistic material rendering.

Text-driven vs. reference-driven texturing. Some tools generate textures from a text prompt alone. Others use an uploaded reference image or existing textured model to transfer a visual style onto a new mesh. Both approaches have practical uses depending on whether you need stylistic consistency or creative exploration.

Part 2: Why the Market Is Shifting to AI Texturing

- The global 3D animation and modeling market was valued at approximately $17.7 billion in 2023 and is projected to reach $31.1 billion by 2030 (CAGR ~8.4%), driven partly by demand for faster asset pipelines.

- In game development surveys, texturing and UV work consistently rank among the top 3 most time-consuming manual tasks for 3D artists.

- Real-time rendering adoption in film VFX, product visualization, and e-commerce has pushed demand for engine-ready PBR assets well beyond what traditional production pipelines can supply at scale.

- AI-assisted texturing tools saw adoption among indie studios grow significantly between 2023 and 2025 as accessible entry points lowered the skill barrier for small teams.

The pressure is straightforward: content volume requirements are increasing faster than team sizes. A single open-world game may need tens of thousands of unique textured assets. E-commerce platforms want 3D product visuals for every SKU. Architectural visualizers are expected to turn around furnished interiors in days, not weeks. Manual texturing at that scale is simply not viable.

AI texture generation does not replace art direction. It handles the repetitive technical labor so artists can focus on the decisions that actually require creative judgment.

Want to see AI texturing in action?

Neural4D AI Texture applies reference style textures to your 3D models automatically, producing relightable PBR outputs ready for any engine.

Part 3: How AI Texture Generation Works with Neural4D

Understanding the pipeline helps you evaluate any tool’s output claims more accurately. Below is how the process works in practice, using Neural4D AI Texture as the example workflow.

① Upload 3D Reference Model → ② Input 2D Image → ③ Generate → ④ Export

Step 1. Upload 3D Reference Model

You start by uploading a 3D mesh to Neural4D AI Texture. This mesh serves as the reference model: it provides the geometric context that guides how the AI applies textures. The system reads its vertex normals, surface curvature, and topology to understand material transition boundaries, for example where a fabric sleeve meets a metal buckle. High-quality triangle topology with clean UV islands gives the AI the clearest structural reference to work from.

Step 2. Input 2D Image

You then provide a 2D image. Neural4D’s AI encodes the image and generates the corresponding texture maps, using the 3D model uploaded in Step 1 as the reference. That reference model helps define the texture language the AI should follow when producing the final surface result.

Step 3. Generate

The AI then produces the required PBR channels based on that reference relationship, generating each map independently: albedo, roughness, metallic, and normal maps. Good outputs maintain spatial consistency across UV seams, which is one of the harder problems in this domain. Neural4D is built around the Direct3D-S2 architecture, which uses Spatial Sparse Attention (SSA) to process volumetric geometry data efficiently — this gives it a stronger foundation for consistent coverage on back-facing geometry, undercuts, and interior-facing surfaces. The output is a relightable albedo with no baked-in environmental lighting, which is critical for dynamic in-engine lighting scenarios.

Step 4. Export

Maps are exported as standard image files (PNG, EXR) alongside the mesh in formats such as .glb, .fbx, .obj, or .usdz. Neural4D separates the albedo from any pre-baked lighting, ensuring you get a pure albedo channel that behaves correctly under dynamic lighting in-engine. The exported package is engine-ready: no additional baking or manual painting steps required.
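For readers who want to see how an exported map set is typically wired up in a .glb file, here is a minimal sketch of a glTF 2.0 material entry. It follows the general glTF convention (base color, a packed metallic-roughness texture, and a normal texture) and is not confirmed to be the exact layout Neural4D writes. The function name, the material name, and the texture indices are hypothetical.

```python
import json


def build_gltf_material(name, base_color_idx, metal_rough_idx, normal_idx):
    """Return a glTF 2.0 material dict that references textures by index.

    The indices are hypothetical positions in the file's "textures" array.
    """
    return {
        "name": name,
        "pbrMetallicRoughness": {
            # Albedo maps to glTF's base color texture.
            "baseColorTexture": {"index": base_color_idx},
            # glTF reads roughness from the G channel and metallic from
            # the B channel of a single packed texture.
            "metallicRoughnessTexture": {"index": metal_rough_idx},
            "metallicFactor": 1.0,
            "roughnessFactor": 1.0,
        },
        "normalTexture": {"index": normal_idx},
    }


material = build_gltf_material("buckle", 0, 1, 2)
print(json.dumps(material, indent=2))
```

If a tool exports roughness and metallic as separate images, they usually need to be packed into one texture before they fit this material layout.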

The baked lighting problem. One of the more common quality issues in AI texture tools is lighting baked into the albedo map. This happens when the model is trained on photographic data without separating illumination from surface color. The result looks fine in screenshots but breaks under real-time relighting. Neural4D explicitly avoids this: the output PBR set is relightable by design. Look for tools that mention relightable or pure albedo output when evaluating for production use.
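A small worked example makes the failure concrete. The sketch below uses a simple Lambertian diffuse term (surface color times the clamped dot product of normal and light direction) as a stand-in for an engine's lighting. The albedo value, normals, and light directions are made-up illustration values, not measurements from any tool.

```python
def lambert(albedo, normal, light_dir):
    """Diffuse shading: albedo scaled by the clamped cosine term N.L."""
    n_dot_l = max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
    return albedo * n_dot_l


TRUE_ALBEDO = 0.8                 # the surface's actual color intensity
side_normal = (1.0, 0.0, 0.0)     # a surface point facing +X

# Lighting present when the training/reference photo was captured.
capture_light = (0.0, 0.0, 1.0)   # light along +Z, so the +X side is dark

# A "baked" albedo stores the photographed value, shadow included.
baked_albedo = lambert(TRUE_ALBEDO, side_normal, capture_light)

# In-engine, the light moves to shine directly on the +X side.
new_light = (1.0, 0.0, 0.0)
clean_result = lambert(TRUE_ALBEDO, side_normal, new_light)
baked_result = lambert(baked_albedo, side_normal, new_light)

print(clean_result)  # 0.8: the pure albedo responds to the new light
print(baked_result)  # 0.0: the baked shadow stays black even when lit
```

The baked map carries the old shadow into every new lighting setup, which is why it can look correct in a static screenshot and wrong as soon as the light moves.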

The mesh itself, when generated through Neural4D’s 3D Studio, uses high-quality triangle topology with automatic retopology and UV generation handled before the texturing step. This means the surface the AI Texture system is working with is already clean geometry, which directly affects projection quality. If you are generating meshes elsewhere and only need the texturing step, the AI Texture tool also works on uploaded meshes from external sources.

That integrated setup helps Neural4D avoid two common failure modes in the category: slot-machine output that changes too much between runs, and texture sets that look good in a thumbnail but break once you relight them in-engine. The team’s background spans Nanjing University, DreamTech, Oxford, and Fudan, which helps explain why the product messaging leans on algorithm design and production constraints rather than generic AI art claims.

Part 4: What to Look for in an AI Texture Tool

Not every tool that calls itself an AI 3D model texture generator delivers production-usable output. These are the criteria that actually matter when you are building or evaluating a workflow.

PBR map completeness. At minimum, a production-ready set needs albedo, roughness, metallic, and normal maps. Tools that only output a diffuse color map are useful for previsualization but will require additional work before engine import.
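A completeness check like this is easy to automate at import time. The sketch below assumes a hypothetical naming convention of `<asset>_<channel>.<ext>`; real tools name their files differently, so the suffix matching would need to be adapted.

```python
REQUIRED_CHANNELS = ("albedo", "roughness", "metallic", "normal")


def missing_channels(filenames, required=REQUIRED_CHANNELS):
    """Return the required channels that have no matching file.

    Assumes names like 'crate_albedo.png' (an illustrative convention).
    """
    found = set()
    for name in filenames:
        stem = name.rsplit(".", 1)[0].lower()  # drop the file extension
        for channel in required:
            if stem.endswith("_" + channel):
                found.add(channel)
    return [c for c in required if c not in found]


files = ["crate_albedo.png", "crate_normal.png", "crate_roughness.png"]
print(missing_channels(files))  # ['metallic']
```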

Topology compatibility. Texture projection quality is only as good as the mesh it wraps. If you are generating meshes with the same tool, check that the geometry uses high-quality triangle topology without degenerate faces or overlapping UV islands. Broken topology leads to visible seams and stretching regardless of how good the texture maps are.
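One of these checks, finding degenerate faces, can be sketched in a few lines. The example below treats a triangle as degenerate when its area is effectively zero (collinear or repeated vertices). The mesh data and the tolerance `eps` are illustrative assumptions, and a real pipeline would read vertices and faces from the mesh file with a mesh library.

```python
def triangle_area(a, b, c):
    """Area of a 3D triangle: half the length of the edge cross product."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    cross = (
        ab[1] * ac[2] - ab[2] * ac[1],
        ab[2] * ac[0] - ab[0] * ac[2],
        ab[0] * ac[1] - ab[1] * ac[0],
    )
    return 0.5 * sum(x * x for x in cross) ** 0.5


def degenerate_faces(vertices, faces, eps=1e-12):
    """Return indices of faces whose area is at or below eps."""
    return [
        i
        for i, (ia, ib, ic) in enumerate(faces)
        if triangle_area(vertices[ia], vertices[ib], vertices[ic]) <= eps
    ]


vertices = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (2, 0, 0)]
faces = [
    (0, 1, 2),  # valid triangle
    (0, 1, 3),  # three collinear points
    (0, 0, 1),  # repeated vertex
]
print(degenerate_faces(vertices, faces))  # [1, 2]
```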

Style reference support. For studios with established visual styles, the ability to use a reference model or image to drive texture generation is a significant workflow advantage. It maintains visual consistency across an asset library without requiring per-asset manual intervention.

Export format coverage. Your textures need to arrive in the right format. Confirm the tool exports to formats your pipeline actually uses: .glb for web and AR, .fbx for game engines, .usdz for Apple ecosystem, .obj for maximum compatibility.
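When comparing tools, the format check can be written down as a simple lookup. The sketch below encodes the format-to-target pairing from the paragraph above; the target labels and the function name are hypothetical, and the tool's export list is made up for illustration.

```python
# Pairing taken from the text above; the target keys are illustrative labels.
PIPELINE_FORMATS = {
    "web_ar": ".glb",
    "game_engine": ".fbx",
    "apple": ".usdz",
    "general": ".obj",
}


def uncovered_targets(tool_exports, targets):
    """Return the pipeline targets whose format the tool does not export."""
    exports = {e.lower() for e in tool_exports}
    return [t for t in targets if PIPELINE_FORMATS[t] not in exports]


needed = ["web_ar", "game_engine", "apple"]
print(uncovered_targets([".glb", ".OBJ"], needed))  # ['game_engine', 'apple']
```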

Speed at scale. For individual assets, speed matters less. For pipelines processing dozens or hundreds of assets, generation time per asset compounds quickly. Tools with efficient inference architectures make a practical difference when you are working at volume.
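The compounding is easy to estimate before committing to a tool. The sketch below uses made-up numbers (300 assets, 90 seconds per asset, 4 parallel jobs) purely to show the arithmetic; substitute timings you measure yourself.

```python
import math


def batch_minutes(asset_count, seconds_per_asset, parallel_jobs=1):
    """Estimate wall-clock minutes to process a batch of assets.

    Assumes every asset takes the same time and jobs run in full waves.
    """
    waves = math.ceil(asset_count / parallel_jobs)
    return waves * seconds_per_asset / 60


print(batch_minutes(300, 90))      # 450.0 minutes when run one at a time
print(batch_minutes(300, 90, 4))   # 112.5 minutes with 4 parallel jobs
```

At these assumed numbers a single queue takes most of a working day, so per-asset generation time and available parallelism both matter once volume grows.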

Deterministic output. Consistency matters in production. A tool that produces different results every run makes QA harder and increases iteration cycles. Look for tools that support seeded generation or show stable output behavior across repeated runs on the same input.
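Stable output can be verified by hashing the results of repeated runs on the same input. The sketch below uses two hypothetical stand-in generators (`seeded_generate` and `unseeded_generate`) that return raw bytes; a real test would call the tool's own generation entry point, whatever that is, and hash the exported map files instead.

```python
import hashlib
import os
import random


def seeded_generate(prompt, seed):
    """Hypothetical stand-in: the same prompt and seed give the same bytes."""
    rng = random.Random(f"{prompt}:{seed}")
    return bytes(rng.randrange(256) for _ in range(64))


def unseeded_generate(prompt, seed):
    """Hypothetical stand-in that ignores the seed, like a 'slot machine'."""
    return os.urandom(64)


def is_deterministic(generate, prompt, seed, runs=3):
    """Run the generator several times and compare SHA-256 digests."""
    digests = {
        hashlib.sha256(generate(prompt, seed)).hexdigest() for _ in range(runs)
    }
    return len(digests) == 1


print(is_deterministic(seeded_generate, "rusty metal", 42))
print(is_deterministic(unseeded_generate, "rusty metal", 42))
```

Byte-for-byte equality is a strict test. Some tools are visually stable but not bit-identical across runs, in which case an image-difference threshold is a more forgiving check.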

Part 5: Practical Workflows by Use Case

Game Asset Pipeline

For indie developers and small studios, the typical workflow is: generate base mesh from image or text, run AI texturing with a style reference matching the game’s visual language, export as .glb or .fbx, import into Unreal or Unity. The main efficiency gain is that the PBR maps are engine-ready without additional baking or painting steps. Building 3D characters for games requires particular attention to topology around deformation zones, so checking edge flow on AI-generated meshes before rigging is worth the extra minute.

Product Visualization

E-commerce and product design teams often need high-fidelity material representations: brushed metal, matte plastic, woven fabric. AI texture generation with a photo reference of the actual material produces PBR maps that hold up under studio and environment lighting rigs. The pure albedo output is particularly important here since product renderers use physically accurate lighting models.

Rapid Prototyping for VFX and Arch-Viz

For concept validation stages, speed matters more than final fidelity. AI 3D model texture generators let teams get textured hero props and environment elements into a scene quickly for director review, with the understanding that final assets may go through additional polish. The workflow compresses the time between a design direction decision and a visible in-scene result.

For teams exploring what the platform can produce across different asset types and prompt styles, these 10 Neural4D prompts to try for 3D assets provide a useful starting reference across categories.

Ready to build a faster texture workflow?

Neural4D’s full 3D Studio gives you mesh generation, AI Texture, and multi-format export in one pipeline. No manual UV work, no separate baking step. If you need automation or volume planning, review the API options and pricing details before rollout.

Part 6: FAQ

Choosing the right AI 3D model texture generator comes down to the quality of PBR output, how well the tool handles topology compatibility, and whether the export fits your pipeline without additional manual steps. For teams that need reference-driven style consistency across an asset library, integrated systems where mesh generation and texturing share the same spatial understanding tend to produce the most predictable results. Neural4D’s AI Texture feature is built on that integration, and it is worth testing against your current workflow to see where the time savings land for your specific asset types.