Best Free Alternatives to Hitem3D 2026

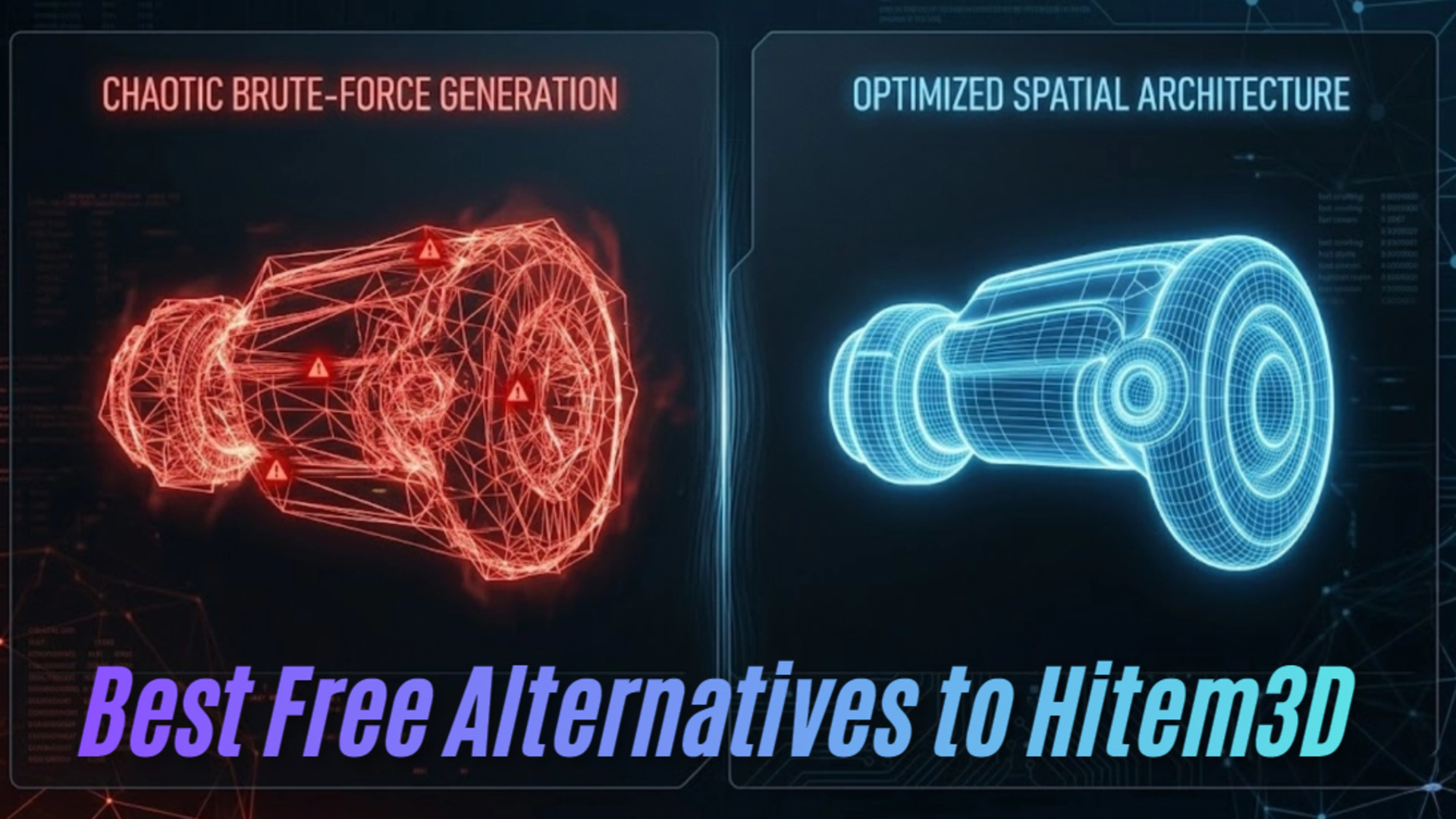

⚡ Quick Summary: Finding a reliable Hitem3D alternative means moving past brute-force density. Engines that output million-polygon files force you into hours of manual decimation just to prevent slicer and game engine crashes. This comprehensive guide exposes the technical flaws behind heavy latency models and probabilistic AI, highlighting how modern spatial architecture delivers 90-second, watertight, production-ready meshes without the prohibitive costs or closed ecosystems.

Generating a usable 3D model requires more than a visually appealing preview in a web browser. The true value of a generated asset depends entirely on how fast it enters your game engine, animation pipeline, or slicing software. Hitem3D popularized ultra-high-resolution AI generation, but it relies on an outdated, brute-force methodology. It prioritizes maximum vertex density over optimal, engine-ready topology.

The standard user experience is frustrating. You push a low-resolution image into the queue. You wait around three minutes for processing. The system spits out a 1.5 million polygon `.obj` file. You import it into Blender, and your viewport freezes instantly. The underlying geometry is filled with non-manifold edges, stretched faces, and overlapping vertices. You spend the next three hours running decimation modifiers and manually patching holes before you can even think about rigging or UV mapping.

This pipeline is broken. Computational overhead should not be offloaded to the user. A modern generative engine must output deterministic, clean assets immediately. If you are searching for a reliable Hitem3D alternative, you need a system that fundamentally changes how the geometry is calculated. We will analyze the technical architecture of the current market leaders and expose why you need a structural upgrade to your 3D workflow.

Table of Contents

- 🔹 Part 1: The Trap of Brute-Force Density

- 🔹 Part 2: Neural4D (The Direct3D-S2 Powerhouse)

- 🔹 Part 3: Meshy and Tripo (The Slot-Machine Problem)

- 🔹 Part 4: Rodin Hyper3D (The Latency Bottleneck)

- 🔹 Part 5: Pipeline Integration & Performance Benchmarks

- 🔹 Part 6: Conclusion – Deploy Your Assets Faster

- 🔹 Hitem3D Alternative FAQ: Troubleshooting AI Generation

Part 1: The Trap of Brute-Force Density

There is a persistent industry myth that higher poly counts equal better models. That is rarely true for real-time applications, mobile games, or physical manufacturing. A hyper-dense mesh kills performance in web viewers, AR environments, and desktop slicers because every extra triangle costs vertex processing time and memory. Tools relying on older volumetric rendering techniques attempt to guess depth by clustering millions of microscopic triangles together.

This creates the illusion of detail, but the reality is a structural nightmare. When an algorithm lacks native volumetric logic, it guesses the obscured geometry. This results in hollow cavities, intersecting faces, and isolated vertices floating inside the main mesh.

Independent developers and small studios cannot afford this computational waste. If a tool gives you a massive file for a simple background prop, it is creating work, not saving it. You need a free alternative that calculates accurate volume and outputs a clean shell tailored for your specific performance budget.
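To make the idea of a performance budget concrete, here is a minimal sketch of the arithmetic behind decimation: the fraction of faces you would have to keep to bring a 1.5 million polygon export down to a target. The budget names and numbers are hypothetical placeholders, not figures from any engine's documentation.

```python
def decimate_ratio(current_faces: int, budget_faces: int) -> float:
    """Fraction of faces to keep so the mesh fits the budget (1.0 = keep all)."""
    if current_faces <= 0 or budget_faces <= 0:
        raise ValueError("face counts must be positive")
    return min(1.0, budget_faces / current_faces)


# Hypothetical per-asset budgets, chosen only for illustration.
BUDGETS = {"mobile_prop": 5_000, "desktop_prop": 30_000, "hero_asset": 150_000}

for name, budget in BUDGETS.items():
    ratio = decimate_ratio(1_500_000, budget)
    print(f"{name}: keep {ratio:.2%} of faces")
```

A ratio this small means the decimator discards almost every triangle the generator produced, which is the waste this section describes.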

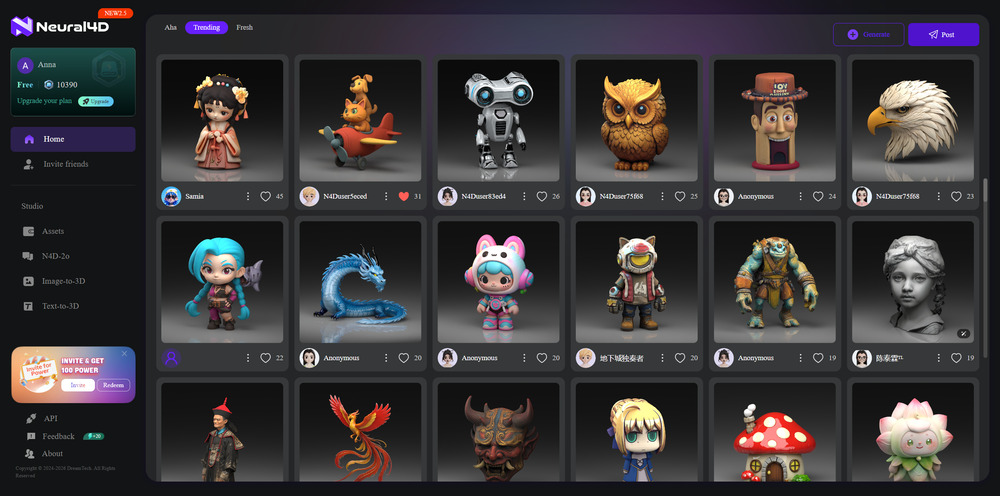

Part 2: Neural4D (The Direct3D-S2 Powerhouse)

We built Neural4D to solve a specific engineering failure: getting functional 3D assets into an engine without intermediate conversion steps. We discarded the probabilistic diffusion models and the brute-force density approach. We engineered the Direct3D-S2 architecture.

This engine utilizes Spatial Sparse Attention (SSA). To put it simply for non-technical users: instead of calculating every possible voxel (the 3D equivalent of a pixel) in a massive 3D grid, the SSA system acts like a smart sensor. It only maps the solid surface boundary of an object and completely ignores the empty air around it. This drops the computational overhead dramatically.
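The scaling argument can be illustrated with a toy voxel grid. The sketch below is not the SSA implementation; it only counts how many cells of a voxelized sphere sit on the surface compared with the full grid. The function name, the sphere shape, and the radius factor are assumptions made for the example.

```python
def occupancy_counts(resolution: int) -> tuple[int, int]:
    """Return (total_cells, boundary_cells) for a sphere on a cubic voxel grid."""
    n = resolution
    centre = (n - 1) / 2
    radius_sq = (0.4 * n) ** 2  # assumed sphere size: 80% of the grid width

    def inside(x: int, y: int, z: int) -> bool:
        if not (0 <= x < n and 0 <= y < n and 0 <= z < n):
            return False
        return (x - centre) ** 2 + (y - centre) ** 2 + (z - centre) ** 2 <= radius_sq

    neighbours = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    boundary = 0
    for x in range(n):
        for y in range(n):
            for z in range(n):
                # A boundary cell is solid but touches at least one empty cell.
                if inside(x, y, z) and any(
                    not inside(x + dx, y + dy, z + dz) for dx, dy, dz in neighbours
                ):
                    boundary += 1
    return n ** 3, boundary


for res in (16, 32):
    total, boundary = occupancy_counts(res)
    print(f"{res}^3 grid: {boundary} boundary cells of {total} ({boundary / total:.1%})")
```

The surface grows roughly with the square of the resolution while the grid grows with the cube, so the share of cells worth processing shrinks as resolution rises.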

Because the engine isn’t wasting processing power rendering empty space, Neural4D achieves 90-second inference while outputting a clean, quad-dominant structure. You get a lightweight, optimized mesh that performs flawlessly in real-time environments.

We also solved the lighting pollution problem. The engine features a pure Albedo/PBR workflow. When you select “Generate PBR Textures” in the studio, the algorithm separates the base color from the structural depth. It outputs mathematically accurate Normal, Roughness, and Metallic maps alongside your geometry. The mesh drops directly into your rendering pipeline, completely relightable.

You get the base mesh ready to use. No manual retopology. No baked-in shadows. This is what defines production-ready 3D AI.

🔍 Mathematical Watertightness for Production

For industrial design, hardware prototyping, and 3D printing, visual fidelity means nothing if the geometry fails in the real world. A mesh with even a single non-manifold edge can cause slicing software to miscalculate toolpaths. This results in missing layers, weak infill, or a failed print.

The Direct3D-S2 engine does not estimate surface depth; it processes the full volume to ensure strict manifold geometry. Every export is mathematically watertight. The walls have actual, calculable thickness. There are no microscopic holes hidden within complex curves, and no floating geometry inside the main body.
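You can check the basic edge condition for watertightness yourself. The sketch below assumes a mesh given as a plain list of vertex-index faces and verifies that every edge is shared by exactly two faces. It is a necessary test only: it does not detect self-intersections or flipped normals, and it is not how Neural4D validates its exports.

```python
from collections import Counter


def edge_report(faces):
    """Return (open_edges, non_manifold_edges) for a list of vertex-index faces."""
    edge_use = Counter()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            edge_use[(min(a, b), max(a, b))] += 1  # undirected edge key
    open_edges = [e for e, count in edge_use.items() if count == 1]
    non_manifold = [e for e, count in edge_use.items() if count > 2]
    return open_edges, non_manifold


def is_watertight(faces) -> bool:
    """True when every edge is shared by exactly two faces."""
    open_edges, non_manifold = edge_report(faces)
    return not open_edges and not non_manifold


# A closed tetrahedron: four triangles, six edges, each used twice.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
print(is_watertight(tetra))       # closed shell
print(is_watertight(tetra[:3]))   # one face removed leaves a hole
```

Open edges indicate holes; edges used by three or more faces are the non-manifold cases that confuse slicers.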

This allows you to bypass secondary repair software entirely. If you need to convert an image to an STL file, Neural4D provides a direct pipeline. You upload your reference image, wait for the SSA generation, export the file, and push it straight to your 3D printer’s slicing environment. It slices instantly. You hit print, and the machine executes without error.

Stop Fixing Broken Topology

Experience 90-second inference and quad-dominant outputs. Build your assets, not your frustration.

Part 3: Meshy and Tripo (The Slot-Machine Problem)

When searching for a faster workflow, many users turn to cloud-based generators that promise rapid outputs. These platforms optimize for speed over structural integrity. They operate on probabilistic diffusion models. We call this the slot-machine problem.

You input a prompt. The engine generates a model. The result varies unpredictably from one run to the next. Sometimes the topology is acceptable. Most of the time, it suffers from hallucinated geometry. Extra limbs, fused geometry, and distorted rear surfaces are standard outputs. Paying per generation under this system forces you to settle for the first acceptable result because you are afraid to waste credits trying to get a perfect mesh.

Furthermore, these tools suffer from baked-in lighting. They project light and shadow directly onto the albedo texture to mask poor geometry. When you drop these assets into Unity or Unreal Engine, they clash with your dynamic scene lighting. You get dead shadows that do not react to your environment. For a deeper technical breakdown of these specific limitations, you can review our engineering analysis of a Meshy alternative.

These platforms also trap you in closed ecosystems with limited export functionality. They force you to use proprietary web viewers before allowing a standard download. If you are comparing Tripo alternatives, the benchmark must be native pipeline integration, not just visual generation speed in a browser.

Part 4: Rodin Hyper3D (The Latency Bottleneck)

Some platforms attempt to solve the topology issue by routing generation through complex, multi-stage diffusion models. They utilize heavy compute clusters to refine the mesh over an extended period. This approach produces cleaner geometry but introduces massive latency into the production pipeline.

A generator cannot support rapid iteration if every pass takes several minutes. Professional 3D artists and technical directors require continuous algorithmic iteration. You need to tweak inputs, hit generate, and review the structural changes instantly. Extended compute times break the creative flow and create a bottleneck in batch inference scenarios.

The cost structure for these heavy compute models is equally prohibitive for small teams or independent creators. High-fidelity generation should not require enterprise-tier hardware costs. To understand how modern architectures bypass this computational heavy lifting, read our structural review of Rodin Hyper3D alternatives.

Part 5: Pipeline Integration & Performance Benchmarks

A fast generator is practically useless if it traps your assets behind a walled garden. Many modern AI tools force you to use their proprietary editing environments and restrict export formats unless you upgrade to an expensive enterprise tier. They offer speed, but they kill your actual production timeline by restricting data mobility.

Neural4D generates the geometry, but more importantly, it lets you take it exactly where you need it immediately. You can export directly to .fbx for your game engine, .glb for real-time web viewing, or .usdz for iOS AR applications. You get the base mesh and PBR textures ready to drop into your existing pipeline. There are no workarounds required. There are no locked formats.

| Feature Benchmark | Hitem3D | Probabilistic AI (Meshy/Tripo) | Neural4D (Direct3D-S2) |
|---|---|---|---|
| Generation Speed | 3 Minutes | Under 1 Minute | 90 Seconds |
| Topology Quality | Extreme Density (Requires Decimation) | Inconsistent / Hallucination Prone | Quad-Dominant / Engine Ready |
| Geometry Type | Often Non-Manifold | Intersecting Faces | Mathematically Watertight |

Stop Fighting Your Slicer and Game Engine

A 3D asset is useless if it requires hours of manual repair. Seamlessly integrate the Direct3D-S2 engine into your workflow. Get mathematically watertight, quad-dominant meshes ready for immediate export and printing.

Part 6: Conclusion – Deploy Your Assets Faster

The era of manually repairing broken AI generation is over. Pushing millions of vertices to compensate for poor algorithmic architecture is a dead end that wastes hardware resources and developer time. Your production pipeline requires speed, deterministic outputs, and native file compatibility. By moving away from bloated, probabilistic generators and integrating the Direct3D-S2 engine, you eliminate the friction between concept and deployment. When evaluating a reliable Hitem3D alternative, the deciding factor is not who generates the most polygons, but who generates the cleanest, most functional geometry ready for immediate integration.

Hitem3D Alternative FAQ: Troubleshooting AI Generation

Is Hitem3D free?

While Hitem3D may offer limited trial credits, the standard model relies on a pay-per-generation or heavy subscription system. Because of the massive computational overhead required to render million-polygon meshes, high-volume generation becomes expensive quickly. A reliable alternative provides a transparent resource system, such as the 50 Power per week on Neural4D’s free tier, ensuring you can iterate creatively without fear of burning credits on broken geometry.

Why do high-polygon models from generators crash my 3D printer slicer?

Slicing software has to load every triangle and intersect it with each layer plane to build toolpaths. When an AI generator brute-forces a mesh with millions of unnecessary polygons, that work can exhaust your system’s RAM and freeze or crash the application. You need optimized edge flow and clean topology, not maximum density.
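For a rough sense of scale, the size of a binary STL file follows directly from its layout: an 80-byte header, a 4-byte triangle count, and 50 bytes per triangle. The sketch below computes only the file size on disk. A slicer's working memory will be higher, and that overhead is not estimated here.

```python
def binary_stl_bytes(triangles: int) -> int:
    """Size of a binary STL: 80-byte header + 4-byte count + 50 bytes per triangle."""
    if triangles < 0:
        raise ValueError("triangle count cannot be negative")
    return 80 + 4 + 50 * triangles


for count in (30_000, 1_500_000):
    size = binary_stl_bytes(count)
    print(f"{count:>9,} triangles -> {size:,} bytes ({size / 1_000_000:.1f} MB)")
```

The file size grows linearly with the triangle count, so a 50x denser mesh is roughly a 50x larger file before the slicer has done any work.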

What is baked-in lighting and why does it ruin game assets?

Baked-in lighting occurs when an AI paints shadows and highlights directly onto the model’s base color texture (albedo). When you place this model in a game engine like Unreal or Unity, those fake shadows clash with your real directional lights, breaking visual logic. A proper workflow separates the base color from the light reaction.
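A small shading calculation shows why this matters. The sketch below uses plain Lambertian diffuse shading, a deliberate simplification of what Unreal or Unity actually compute, and a hypothetical texel whose base color was darkened by a baked shadow factor of 0.5.

```python
def lambert(normal, light_dir) -> float:
    """Diffuse term: cosine of the angle between unit normal and unit light direction."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


def shade(base_color, normal, light_dir):
    """Multiply the texture color by the diffuse term, rounded for readability."""
    k = lambert(normal, light_dir)
    return tuple(round(c * k, 3) for c in base_color)


clean_albedo = (0.8, 0.6, 0.4)                       # pure base color
baked_albedo = tuple(c * 0.5 for c in clean_albedo)  # shadow painted into the texture

normal = (0.0, 0.0, 1.0)
head_on_light = (0.0, 0.0, 1.0)  # scene light pointing straight at the surface

print("clean:", shade(clean_albedo, normal, head_on_light))
print("baked:", shade(baked_albedo, normal, head_on_light))
```

Even with the scene light aimed directly at the surface, the baked texel stays at half brightness, because the engine has no way to remove a shadow that lives in the color data.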

Is Neural4D a viable commercial alternative?

Free tier outputs (50 Power/week) are marked for testing and prototyping only. Paid subscription tiers grant you full commercial usage rights for all generated geometry and textures, making it a highly cost-effective replacement for restrictive, pay-per-generation models.

How does Spatial Sparse Attention (SSA) speed up generation?

Instead of forcing the computer to calculate every point in a 3D space, SSA only processes the physical boundaries of the object. By ignoring the empty space, it drastically reduces computational load, bringing generation times down to 90 seconds while maintaining watertight structural integrity.