How to Make 3D Human Models That Actually Work

Most AI-generated humans fail the second you try to use them.

You can generate a 3D human model in 10 seconds. Then you spend 3 hours fixing it. That is the real cost.

If a mesh fails slicing, you waste resin. If it collapses during rigging, you restart animation. If topology breaks deformation, you redo weight painting.

Speed without structural integrity is a liability.

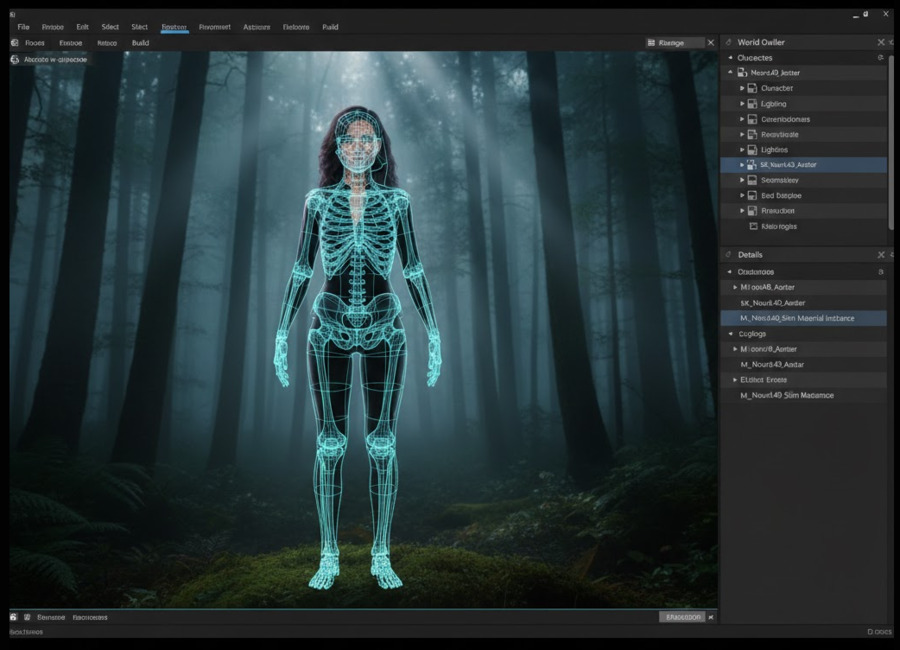

This guide explains how to generate a watertight 3D human model that survives real production environments, and why Neural4D’s Direct3D-S2 fixes the geometry problem at its foundation.

Read also: Generating Production-Ready Full Body Female 3D Models

Part 1. Why Most AI 3D Human Models Fail the Engine Test

Speed is easy. Geometry is hard.

• Non-manifold geometry: Holes (open edges), edges shared by more than two faces, and flipped normals, all of which break slicers such as Cura and PrusaSlicer.

• Random triangle density: High-poly noise on flat surfaces, low-poly jaggedness on curves.

• Hollow shells: Surfaces with zero thickness that collapse during scaling.

• Baked-in lighting: Shadows from the photo are painted onto the texture, making the model unusable under dynamic lighting.

Fixing these issues manually involves retopology, UV unwrapping, and texture painting, a process that takes 2–5 hours per asset.

That is not AI acceleration. That is deferred manual labor.
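The geometric failure modes above can be detected with nothing more than an edge count: a hole leaves edges used by only one triangle, a non-manifold edge is shared by three or more, and a flipped normal makes two neighboring triangles walk their shared edge in the same direction. The sketch below is a generic check, not Neural4D's code, and it assumes your mesh is already loaded as a list of vertex-index triangles.

```python
from collections import Counter


def edge_report(faces):
    """Classify the edges of a triangle mesh given as (a, b, c) index triples.

    boundary:     edges used by exactly one face (holes / open edges)
    non_manifold: edges shared by more than two faces
    inconsistent: directed edges traversed more than once, which happens when
                  neighboring faces disagree on orientation (flipped normals);
                  non-manifold edges also trigger this by necessity
    """
    undirected = Counter()
    directed = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            undirected[frozenset((u, v))] += 1
            directed[(u, v)] += 1

    return {
        "boundary": sum(1 for n in undirected.values() if n == 1),
        "non_manifold": sum(1 for n in undirected.values() if n > 2),
        # In a closed, consistently oriented mesh every directed edge appears once.
        "inconsistent": sum(1 for n in directed.values() if n > 1),
    }


if __name__ == "__main__":
    # A closed tetrahedron with outward-facing triangles: all counts are zero.
    tetra = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
    print(edge_report(tetra))
    # Deleting one face opens a hole bounded by three edges.
    print(edge_report(tetra[:3]))
```

A mesh that a slicer will accept without repair should report zero in all three counts.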

Read also: The Hidden Cost of “Free 3D Car Models”

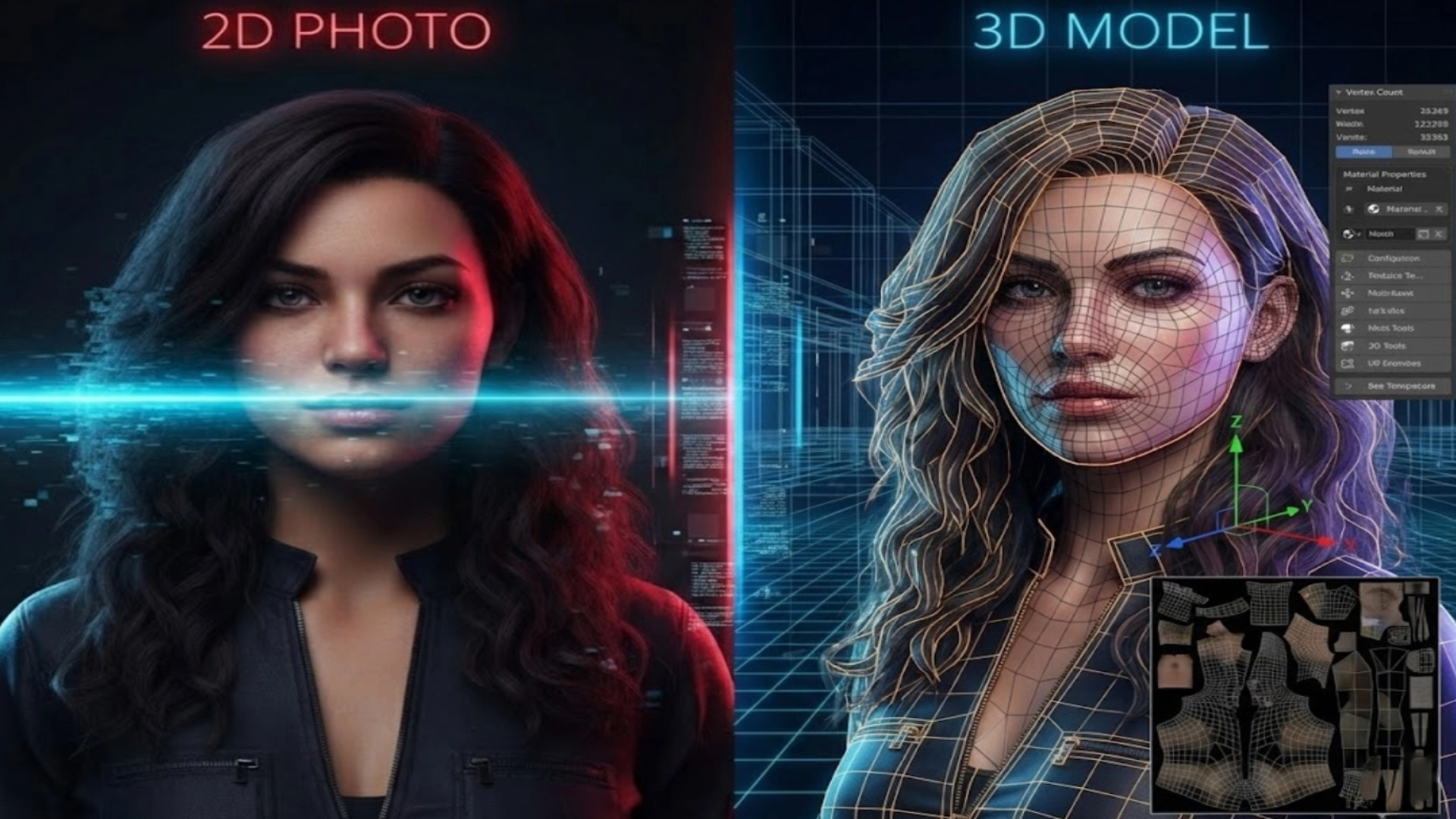

Part 2. From Photo to Watertight 3D Human Model

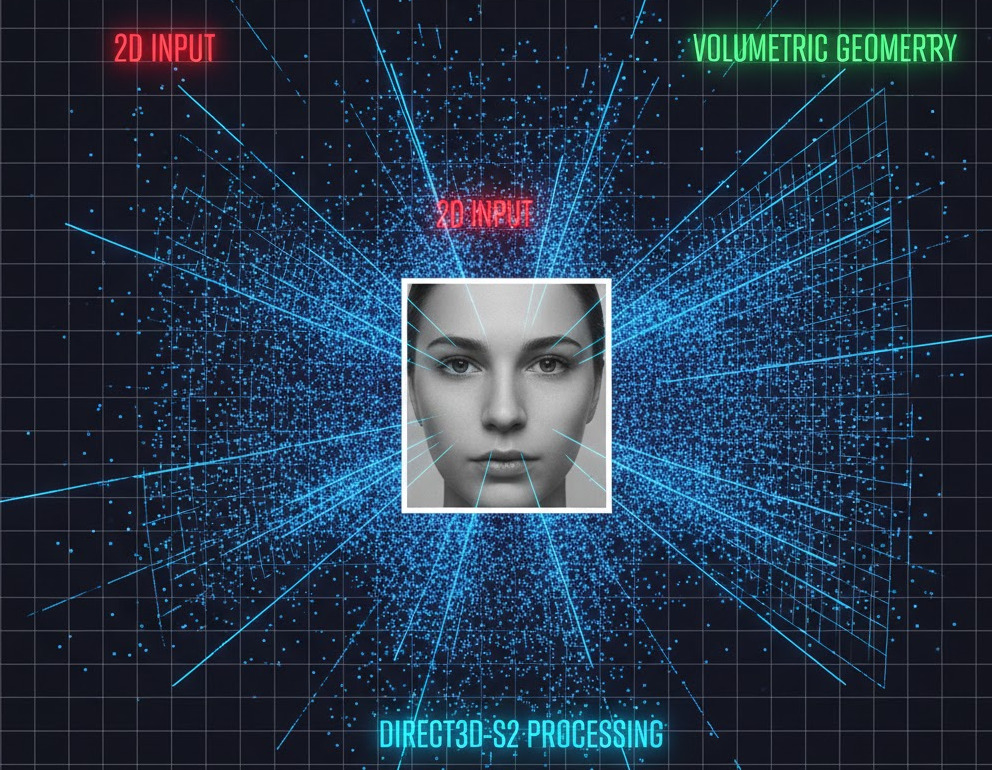

Neural4D approaches the problem differently. Instead of wrapping images onto guessed shapes, our Direct3D-S2 engine generates geometry volumetrically at 2048³ resolution.

The model is constructed as a solid body. Not a hollow surface.

Result: Sharper facial features, cleaner finger separation, and consistent surface thickness without geometric noise.

The final mesh is watertight by default. No open edges. No hidden holes. No internal self-intersections. You skip the Blender repair phase entirely.
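One way to see why "solid body" and "watertight" go together: a closed, outward-oriented mesh encloses a well-defined volume, which the divergence theorem lets you compute as a sum of signed tetrahedra (origin plus each triangle). For an open shell the same sum changes when you move the mesh, so it is not a real volume at all. This is a generic textbook check, not Neural4D's pipeline; the tetrahedron stands in for a real character mesh.

```python
def signed_volume(vertices, faces):
    """Sum of signed volumes of the tetrahedra (origin, a, b, c) over all faces.

    For a closed, outward-oriented mesh this equals the enclosed volume and is
    independent of the origin. For an open mesh it depends on translation.
    """
    total = 0.0
    for ia, ib, ic in faces:
        ax, ay, az = vertices[ia]
        bx, by, bz = vertices[ib]
        cx, cy, cz = vertices[ic]
        # Scalar triple product a . (b x c)
        total += (ax * (by * cz - bz * cy)
                  - ay * (bx * cz - bz * cx)
                  + az * (bx * cy - by * cx))
    return total / 6.0


def translate(vertices, dx, dy, dz):
    return [(x + dx, y + dy, z + dz) for x, y, z in vertices]


# Unit tetrahedron with outward-facing triangles (stand-in for a real mesh).
tet_v = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
tet_f = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]

if __name__ == "__main__":
    moved = translate(tet_v, 5, 5, 5)
    print("closed:", signed_volume(tet_v, tet_f), signed_volume(moved, tet_f))
    print("open:  ", signed_volume(tet_v, tet_f[:3]), signed_volume(moved, tet_f[:3]))
```

The closed tetrahedron reports 1/6 wherever it sits; the same shape with one face missing reports a different "volume" after a simple move, which is exactly the ambiguity a slicer faces with a hollow, open shell.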

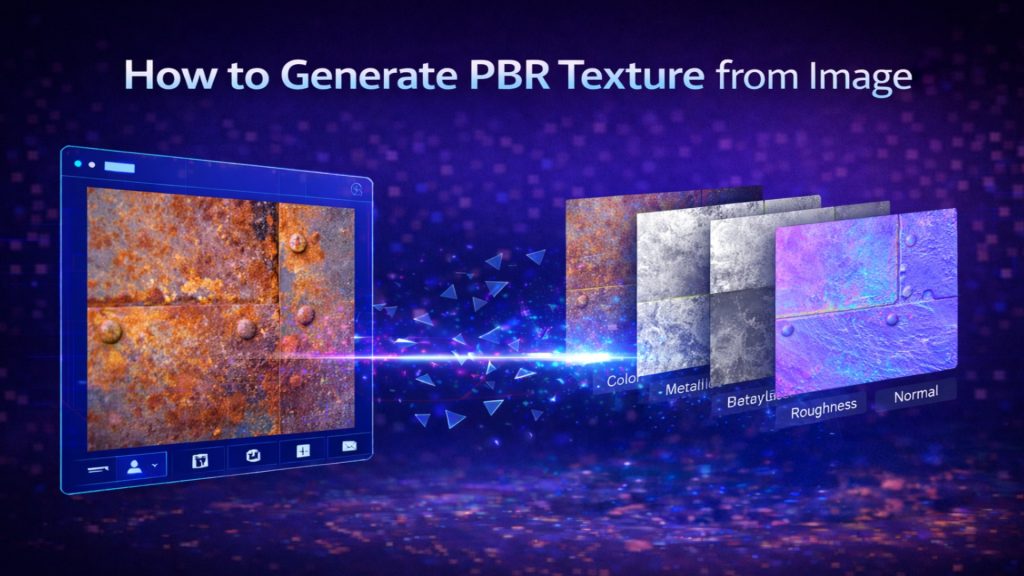

Part 3. Beyond Geometry: Generating PBR Textures (Not Just Photos)

Geometry is only half the battle. If your model looks like a photo pasted onto a statue, it fails in modern game engines.

The problem with basic photogrammetry is baked lighting. If your source photo has a shadow under the nose, that shadow becomes part of the skin color. Put that model in a night scene, and the face still looks lit by direct sunlight. It breaks immersion immediately.
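The effect is easy to reproduce with the simplest lighting model, Lambertian diffuse shading (color times the cosine between surface normal and light). This sketch uses made-up values and is a generic illustration, not Neural4D's de-lighting method: it compares a texel whose color was captured with the photo's shadow baked in against one that stores only albedo, then relights both with a game light from below.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def shade(texel, normal, light_dir):
    """Lambertian diffuse response: texel * max(0, n . l), unit vectors assumed."""
    return texel * max(0.0, dot(normal, light_dir))


# Hypothetical values for one texel under the nose.
albedo = 0.6                               # true skin reflectance (assumed)
normal = normalize((0.0, -1.0, 0.2))       # surface faces mostly downward
photo_light = normalize((0.0, 1.0, 1.0))   # sun from above-front in the photo
scene_light = normalize((0.0, -1.0, 0.5))  # game light from below-front

# Baked: the photo's shading is frozen into the color texture.
baked_texel = shade(albedo, normal, photo_light)
# De-lit: the texture stores albedo only; shading happens at render time.
delit_texel = albedo

baked_render = shade(baked_texel, normal, scene_light)
delit_render = shade(delit_texel, normal, scene_light)
print(f"baked: {baked_render:.3f}  de-lit: {delit_render:.3f}")
```

The baked texel stays black no matter where the new light comes from, because the engine multiplies its own lighting on top of the photo's.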

Neural4D separates lighting from material. We generate a full PBR (Physically Based Rendering) material set:

- Albedo Map (Diffuse): Pure surface color. We use AI de-lighting to remove shadows and highlights from the source image, giving you a neutral texture that reacts correctly to in-game lights.

- Normal Map: High-frequency details like pores, wrinkles, and fabric weave are baked into a normal map, adding depth without increasing polygon count.

- Roughness Map: Controls how sharply or diffusely a surface reflects light. The system assigns high roughness (broad, soft highlights) to skin and fabric, and low roughness (tight, glossy highlights) to eyes and lips.
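To make "roughness" concrete: real-time PBR shaders commonly describe the specular highlight with the GGX (Trowbridge-Reitz) microfacet distribution. The sketch below evaluates the standard GGX formula with the widespread α = roughness² remapping; the 0.3 and 0.7 roughness values are hypothetical stand-ins for lips and skin, not values Neural4D outputs.

```python
import math


def ggx_ndf(n_dot_h: float, roughness: float) -> float:
    """GGX (Trowbridge-Reitz) normal distribution function D(h).

    Uses the common alpha = roughness**2 remapping. D measures how many
    microfacets face the half-vector h; a tall, narrow peak around
    n_dot_h = 1 means a small, sharp highlight.
    """
    alpha = roughness * roughness
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


def falloff(roughness: float, degrees: float) -> float:
    """Highlight strength `degrees` away from the peak, relative to the peak."""
    off_peak = math.cos(math.radians(degrees))
    return ggx_ndf(off_peak, roughness) / ggx_ndf(1.0, roughness)


for label, r in (("lips (hypothetical 0.3)", 0.3), ("skin (hypothetical 0.7)", 0.7)):
    print(f"{label}: peak D = {ggx_ndf(1.0, r):.2f}, "
          f"strength 10 deg off-peak = {falloff(r, 10):.2f} of peak")
```

With roughness 0.3 the highlight drops to roughly 5% of its peak within 10° of the mirror direction; at 0.7 it is still above 80% there, which is the broad, soft sheen skin needs.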

Read also: Generate PBR Texture from Image with Neural4D TexTrix

This means you can drop the asset into Unreal Engine 5 or Unity HDRP, and it will respond naturally to your environment lighting.

🔥 3D Printing: Export Without Repair

Watertight geometry matters most at small scale. A 0.3mm surface defect that is invisible on screen can destroy a 28mm miniature print.
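The arithmetic behind that claim, as a quick sketch. Treating "28mm" as the full figure height, the 0.05 mm resin layer height, and the 1.8 m life-size reference are all assumptions for illustration.

```python
defect_mm = 0.3          # defect size quoted above
figure_height_mm = 28.0  # treating "28mm" as full figure height (assumption)
layer_height_mm = 0.05   # assumed resin layer height; many printers run 0.025-0.05 mm
person_height_mm = 1800  # assumed life-size height for the scale comparison

share_of_height = defect_mm / figure_height_mm
layers_spanned = defect_mm / layer_height_mm
life_size_mm = defect_mm * person_height_mm / figure_height_mm

print(f"{share_of_height:.1%} of the figure's height")
print(f"spans about {round(layers_spanned)} print layers")
print(f"equivalent to a {life_size_mm / 10:.1f} cm flaw on a life-size person")
```

At miniature scale, a flaw too small to notice on a monitor becomes the equivalent of a gouge roughly two centimeters wide across a real face.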

Because Direct3D-S2 produces a closed, continuous volume, you can export an STL and load it directly into your slicer.

Slicing Strategy: 0% Infill to Solid Parts

Most AI-to-3D models are thin shells. If you print them hollow, they crush. If you print them solid, the internal intersecting geometry confuses the slicer, creating voids.

Neural4D models are boolean-safe solids. For miniatures:

- Wall Thickness: The mesh guarantees a minimum thickness, preventing paper-thin walls that fail to print.

- Manifold Guarantee: No non-manifold edges means your slicer won’t generate repair warnings.

- Support Generation: Clean overhangs under the chin and arms allow for easy support removal (Tree Supports recommended) without pitting the surface.
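Support generation is driven by overhang angle: how far a face leans past vertical toward the build plate. The sketch below shows the per-face test in its simplest form, assuming +Z is the build direction and a 45° threshold (a common rule of thumb, not a universal constant). Real slicers work on sliced layers and add many refinements, so treat this only as an illustration of why chin and arm undersides get flagged.

```python
import math


def face_normal(a, b, c):
    """Unit normal of triangle (a, b, c); counter-clockwise winding = front face."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    n = (u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0])
    length = math.sqrt(sum(x * x for x in n))
    return tuple(x / length for x in n)


def overhang_angle(a, b, c):
    """Degrees past vertical that a face leans toward the build plate.

    0 = vertical wall (or any upward-facing face), 90 = flat ceiling facing down.
    Assumes +Z is the build direction.
    """
    down = -face_normal(a, b, c)[2]
    return math.degrees(math.asin(max(0.0, min(1.0, down))))


def needs_support(a, b, c, threshold_deg=45.0):
    # Hypothetical default; tune for your printer, material, and slicer.
    return overhang_angle(a, b, c) > threshold_deg


if __name__ == "__main__":
    s = math.sqrt(3) / 2
    chin_underside = ((0, 0, 0), (1, 0, 0), (0, -s, 0.5))  # leans 60 deg past vertical
    cheek = ((0, 0, 0), (1, 0, 0), (0, -0.5, s))           # leans 30 deg past vertical
    print(needs_support(*chin_underside), needs_support(*cheek))
```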

🔥 Game Development: The Rigging Reality Check

Static meshes are easy. Deforming meshes are hard. The true test of a 3D human model is what happens when you bend the elbow.

The Mixamo Standard

Bad topology collapses volume. If the mesh is a random soup of triangles, bending an arm will make the elbow look like a crushed soda can. This is the #1 reason developers reject AI models.
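The soda-can collapse has a simple geometric core. Most real-time engines deform characters with linear blend skinning (LBS): each vertex moves to the weighted average of where its bones would put it. Averaging a point with a rotated copy of itself pulls it toward the joint, by a factor of cos(θ/2) for an even 50/50 weight split. The 2D sketch below is generic skinning math, not Neural4D code; the vertex position and weights are hypothetical.

```python
import math


def rotate(point, degrees):
    """Rotate a 2D point about the joint, which sits at the origin."""
    t = math.radians(degrees)
    x, y = point
    return (x * math.cos(t) - y * math.sin(t), x * math.sin(t) + y * math.cos(t))


def lbs(point, bend_deg, w_child):
    """Two-bone linear blend skinning: the parent stays put, the child bends."""
    px, py = point                    # parent bone: identity transform
    cx, cy = rotate(point, bend_deg)  # child bone: rotated by the bend angle
    w_parent = 1.0 - w_child
    return (w_parent * px + w_child * cx, w_parent * py + w_child * cy)


skin_point = (0.0, 1.0)  # hypothetical vertex one unit from the elbow joint
for bend in (0, 45, 90, 135):
    x, y = lbs(skin_point, bend, w_child=0.5)
    print(f"bend {bend:3d} deg -> distance from joint {math.hypot(x, y):.3f}")
```

At a 90° bend an evenly weighted vertex ends up at about 71% of its rest distance from the joint. Edge loops do not change this math, but they give riggers and auto-riggers enough well-placed vertices to shape the weight transition, which a chaotic triangulation does not.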

Neural4D includes Auto-Retopology designed for animation. We align edge loops with natural muscle deformation zones.

The Workflow:

- Export: Download your model as .OBJ (Quads enabled).

- Auto-Rig: Upload directly to Mixamo or ActorCore AccuRig.

- Verify: Apply a Run or Punch animation.

Because the topology follows the flow of the deltoids and knees, the mesh deforms smoothly. No spiking vertices. No volume loss at the joints. You spend less time correcting weight painting and more time animating.
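Before uploading to Mixamo or AccuRig, you can confirm that a "Quads enabled" export actually produced quads. OBJ stores each face as an `f` line listing its vertex references, so counting references per line is enough. A minimal stdlib sketch; it ignores OBJ's rarely used backslash line continuations, and the sample mesh is made up.

```python
from collections import Counter


def face_sizes(obj_text):
    """Count OBJ faces by vertex count (3 = triangle, 4 = quad, 5+ = n-gon)."""
    sizes = Counter()
    for line in obj_text.splitlines():
        parts = line.split()
        # Each token after "f" is one vertex reference (v, v/vt, v//vn or v/vt/vn).
        if parts and parts[0] == "f":
            sizes[len(parts) - 1] += 1
    return sizes


def quad_ratio(obj_text):
    """Fraction of faces that are quads (0.0 for a file with no faces)."""
    sizes = face_sizes(obj_text)
    total = sum(sizes.values())
    return sizes[4] / total if total else 0.0


sample = """\
v 0 0 0
v 1 0 0
v 1 1 0
v 0 1 0
v 0.5 0.5 1
f 1 2 3 4
f 1 2 5
f 2 3 5
"""

if __name__ == "__main__":
    print(dict(face_sizes(sample)), f"quads: {quad_ratio(sample):.0%}")
```

For a real export, pass the file contents instead, e.g. `quad_ratio(open("character.obj").read())` (the file name is hypothetical).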

Part 4. Input Engineering: How to Feed the Algorithm

Even the best engine cannot fix bad data. To get the 2048³ quality we promise, you need to follow specific input engineering protocols. Stop feeding the AI garbage.

1. Resolution Matters (Minimum 2K)

Direct3D-S2 calculates depth at the voxel level. If you upload a blurry 512px image, its Spatial Sparse Attention mechanism has no edges to latch onto. Use input images of at least 2048×2048 pixels for optimal texture mapping and pore-level detail.
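The 2048 px rule is easy to enforce before upload. This stdlib-only sketch reads a PNG's dimensions straight from its header (the IHDR chunk always comes first). Reading "2048×2048" as "shorter side at least 2048 px" is our interpretation, and JPEG files would need a different parser or an imaging library.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> tuple:
    """Return (width, height) from the first 24 bytes of a PNG file."""
    # Layout: 8-byte signature, 4-byte chunk length, b"IHDR", then width, height.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def meets_minimum(width: int, height: int, min_side: int = 2048) -> bool:
    """True if the shorter side reaches the recommended 2048 px (assumed reading)."""
    return min(width, height) >= min_side


# Demo with a synthetic header; for a real file use open(path, "rb").read(24).
header = PNG_SIGNATURE + struct.pack(">I", 13) + b"IHDR" + struct.pack(">II", 1920, 1080)
w, h = png_dimensions(header)
print(w, h, meets_minimum(w, h))
```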

2. Lighting: Flat is King

Avoid dramatic, moody lighting. Strong shadows create false geometry: the AI might interpret a dark shadow on a cheek as a concave hollow.

Best Practice: Use soft, even lighting (overcast day or softbox). The flatter the light, the more accurate the geometric reconstruction.
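If you want a rough, objective version of "flat", photographers already have one: the lighting ratio between the lit and shadowed sides of the face, expressed in stops (each stop is a doubling of light). The sketch below converts sampled 8-bit sRGB skin pixels to relative luminance and compares them. The sample pixels and the 1-stop (2:1) cutoff are our assumptions for illustration, not a Neural4D requirement.

```python
import math


def srgb_to_linear(c: float) -> float:
    """Convert one sRGB channel in [0, 1] to linear light (standard sRGB curve)."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def luminance(rgb):
    """Relative luminance of an 8-bit sRGB pixel, using Rec. 709 coefficients."""
    r, g, b = (srgb_to_linear(v / 255.0) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def lighting_stops(lit_rgb, shadow_rgb):
    """Difference in photographic stops between lit and shadowed skin."""
    return math.log2(luminance(lit_rgb) / luminance(shadow_rgb))


MAX_STOPS = 1.0  # hypothetical cutoff (about a 2:1 ratio)

lit_cheek = (200, 160, 140)      # hypothetical samples from an input photo
soft_shadow = (165, 130, 115)
hard_shadow = (60, 40, 35)

for name, shadow in (("softbox", soft_shadow), ("moody", hard_shadow)):
    stops = lighting_stops(lit_cheek, shadow)
    verdict = "flat enough" if stops <= MAX_STOPS else "too contrasty"
    print(f"{name}: {stops:.2f} stops -> {verdict}")
```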

3. The Lens Factor (50mm+)

Wide-angle selfies (taken with a phone held close to the face) distort proportions, making the nose look huge and the ears disappear. Neural4D reproduces exactly what it sees.

Best Practice: Step back and zoom in (telephoto), or use a 50mm – 85mm focal length. This preserves correct anatomical proportions.
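The distortion comes from camera distance rather than from the lens itself: a longer focal length simply makes you stand farther back for the same framing. A pinhole-camera sketch shows the effect. Image size scales with 1 / distance, so the nose (closer) is enlarged relative to the ears by the ratio of their distances. The camera distances and the 10 cm nose-to-ear depth are illustrative assumptions.

```python
def nose_to_ear_magnification(camera_to_nose_cm: float,
                              nose_to_ear_cm: float = 10.0) -> float:
    """How much larger the nose is rendered relative to the ears (pinhole model).

    Ratio of magnifications = (distance to ears) / (distance to nose).
    The 10 cm nose-to-ear depth is a rough assumption, not a measured value.
    """
    return (camera_to_nose_cm + nose_to_ear_cm) / camera_to_nose_cm


for distance in (30, 60, 150):  # arm's-length selfie vs. stepping back
    exaggeration = nose_to_ear_magnification(distance) - 1
    print(f"{distance:4d} cm: nose enlarged ~{exaggeration:.0%} relative to ears")
```

At selfie distance the nose is exaggerated by about a third relative to the ears; from 1.5 m the error falls to a few percent, which is why "step back and zoom in" works.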

Part 5. Production Ready, Not Just “Preview Ready”

Fast preview models look impressive in thumbnails but fail in production. Real assets need to survive export, rigging, slicing, scaling, and engine integration.

If your workflow currently includes steps like "Repair in Blender," "Fix Normals," or "Re-UV Map," the generation step failed.

Direct3D-S2 completes the geometry step at generation time. We provide the watertight mesh, the clean topology, and the PBR maps so you can focus on building your game, not fixing our polygons.

Part 6. Frequently Asked Questions

Can AI-generated 3D human models be rigged in Mixamo?

Yes, but only if the topology is correct. Most AI generators output triangle soup that breaks when bent. Neural4D uses Auto-Retopology to create clean, quad-based meshes with proper edge flow around joints. This makes them fully compatible with auto-rigging tools like Mixamo and ActorCore AccuRig without manual cleanup.

What is the best input image for 3D generation?

Input engineering is critical. We recommend:

1. Resolution: Minimum 2048×2048 pixels.

2. Lighting: Flat, even lighting (no harsh shadows).

3. Focal Length: 50mm or higher to avoid facial distortion.

Avoid wide-angle selfies, as they distort the nose and jawline, which the AI will interpret as actual geometry.

Are Neural4D models watertight for 3D printing?

Yes. Unlike surface-based photogrammetry, our Direct3D-S2 engine generates a solid volumetric mass. The resulting STL export is manifold (watertight) by default, meaning it has no holes or non-manifold edges. You can slice it immediately in Cura or PrusaSlicer without using mesh repair tools.

Do I own the commercial rights to the generated 3D models?

If you are on a paid subscription, you own full commercial rights (Royalty-Free) to all assets you generate. You can use them in sold games, printed products, or client work. Users on the Free Trial (600 Power) are limited to non-commercial testing and personal use only.